Key Takeaways

- Monitoring LLMs is crucial for reliable, high-quality, and safe outputs as LLMs are deployed in increasingly complex and sensitive scenarios.

- Best LLM monitoring tools like KPI.me provide features like performance tracking, cost management, and integration options — all with their respective pros and cons.

- Evaluating critical monitoring capabilities—like performance metrics, cost tracking, and quality assurance—helps organizations make informed decisions to optimize both efficiency and compliance.

- Integrating user feedback and strong data privacy protections builds trust, increases LLM precision, and complies with global data regulations.

- Choosing the right tool involves thinking about integration with your current infrastructure, scalability for future needs, and intuitive interfaces for smooth workflows.

- Human oversight is still pivotal in LLM monitoring, facilitating ongoing enhancement and the capability to tackle intricate or subtle issues that automated systems might overlook.

LLMs (Large Language Models) are amazing, but let’s be real—they don’t always get it right. From random hallucinations to oddball responses, these models sometimes need a little babysitting. That’s where monitoring tools step in.

The best LLM monitoring tools act like a safety net. They catch errors, flag unusual behavior, and give you a clear view of what’s happening in real time with easy-to-read dashboards, alerts, and logs. In short, they help keep your AI accurate, safe, and trustworthy.

In this article, we’ll break down what makes a great monitoring tool and share the top picks for 2025 that can help you keep your AI running smoothly.

Why Monitor LLMs?

Monitoring LLMs isn’t just a behind-the-scenes task; it’s a must-have for anyone relying on these tools, especially agencies and consultants. Done right, monitoring helps ensure outputs stay accurate, fair, and valuable.

Without it, things can quickly go wrong. LLMs might contradict themselves, drift into biased or toxic responses, or simply produce errors that damage trust. These models are powerful, but also risky—and keeping an eye on them makes all the difference.

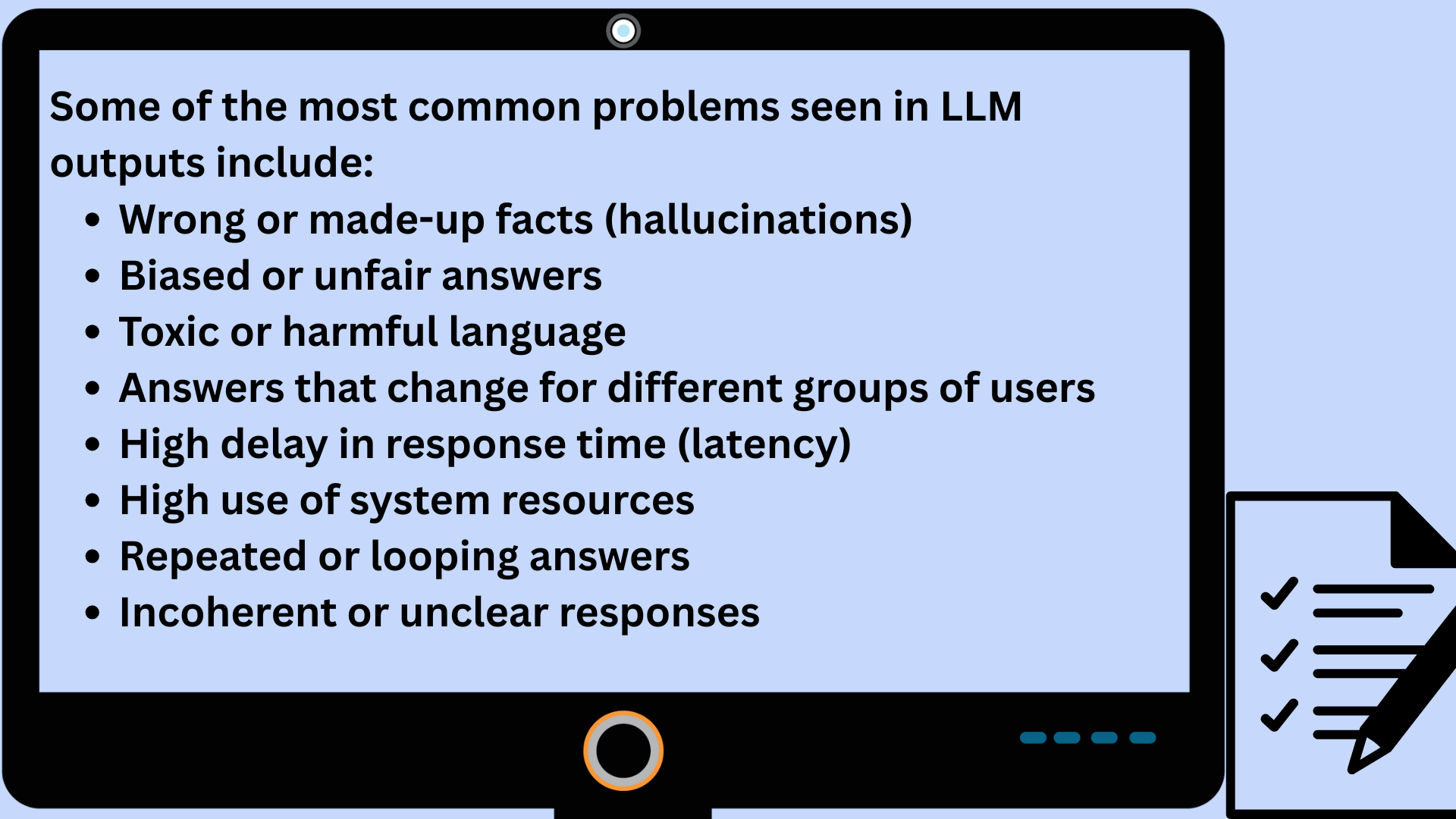

LLMs, intrinsically, are intricate and can exhibit a wide variety of problems.

Monitoring LLMs isn’t just fixing bugs—it’s about keeping AI trustworthy, fair, and compliant. Without it, models risk biased answers, contradictions, or even leaking sensitive data.

Why monitoring matters:

- Protects user trust and avoids compliance issues

- Catches risky or biased outputs early

- Highlights where users struggle or get poor answers

- Provides data to optimize accuracy, speed, and consistency

Fairness & compliance:

- Ensures all users get equal treatment

- Helps meet strict data regulations across regions

- Creates transparency for regulators and clients

Automation + human review:

- Automated tools catch issues at scale

- Humans spot nuance (like sarcasm or tone) that AI misses

- Together, they build more reliable AI for SEO, marketing, and content creation

Best LLM Monitoring Tools in 2025

Monitoring large language models (LLMs) goes beyond uptime checks. It’s about making sure your AI delivers reliable, unbiased, and safe responses. The right tool can help you detect hallucinations, flag anomalies, and optimize performance in real time.

Here are some of the best tools worth checking out in 2025:

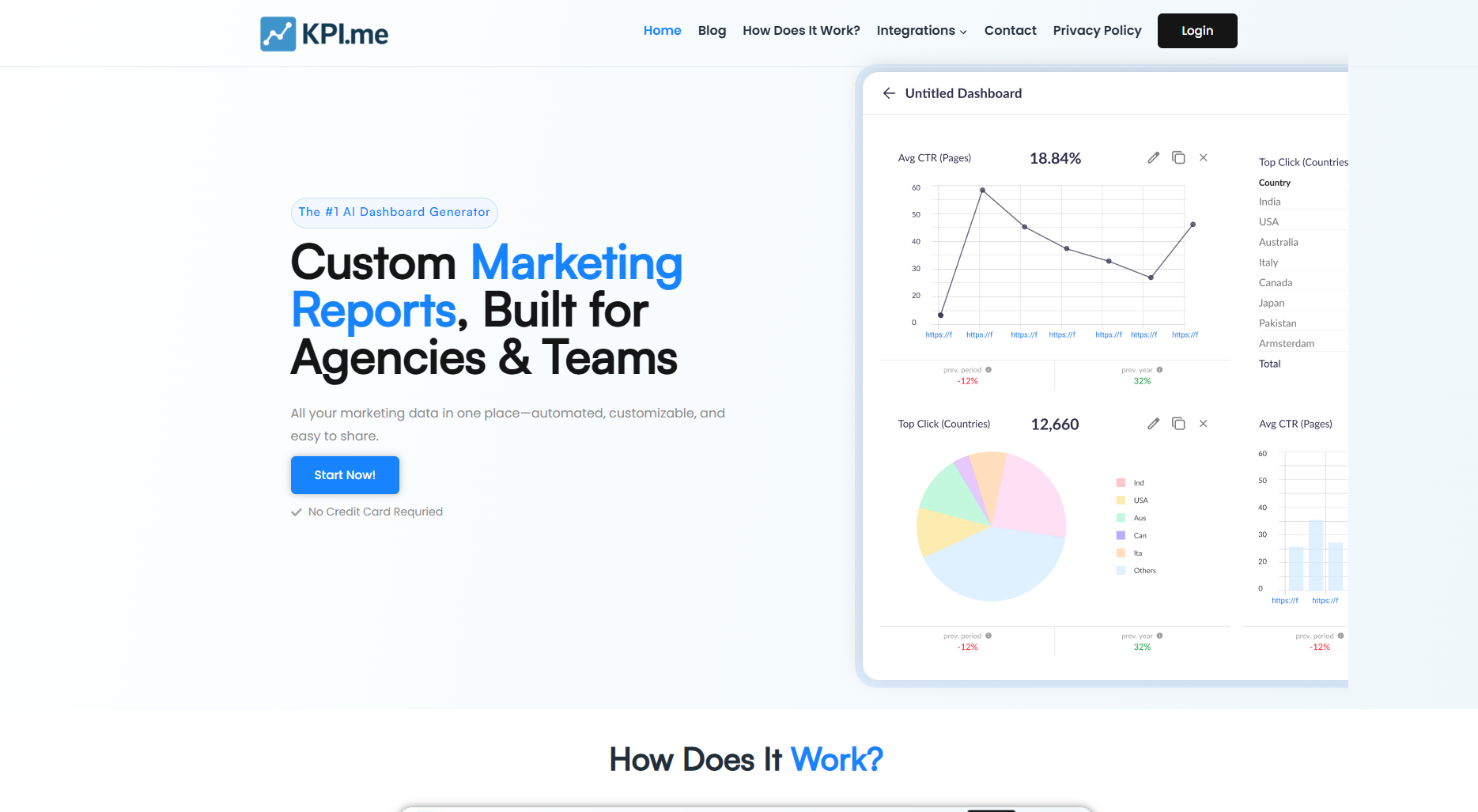

1. KPI.me

A rising star designed for teams who want clear, no-fuss monitoring. KPI.me offers customizable dashboards, prompt templating, and version testing so you can track what matters.

Best for: Agencies and consultants who want simplicity and speed

Watch out for: Limited flexibility if you need very niche metrics

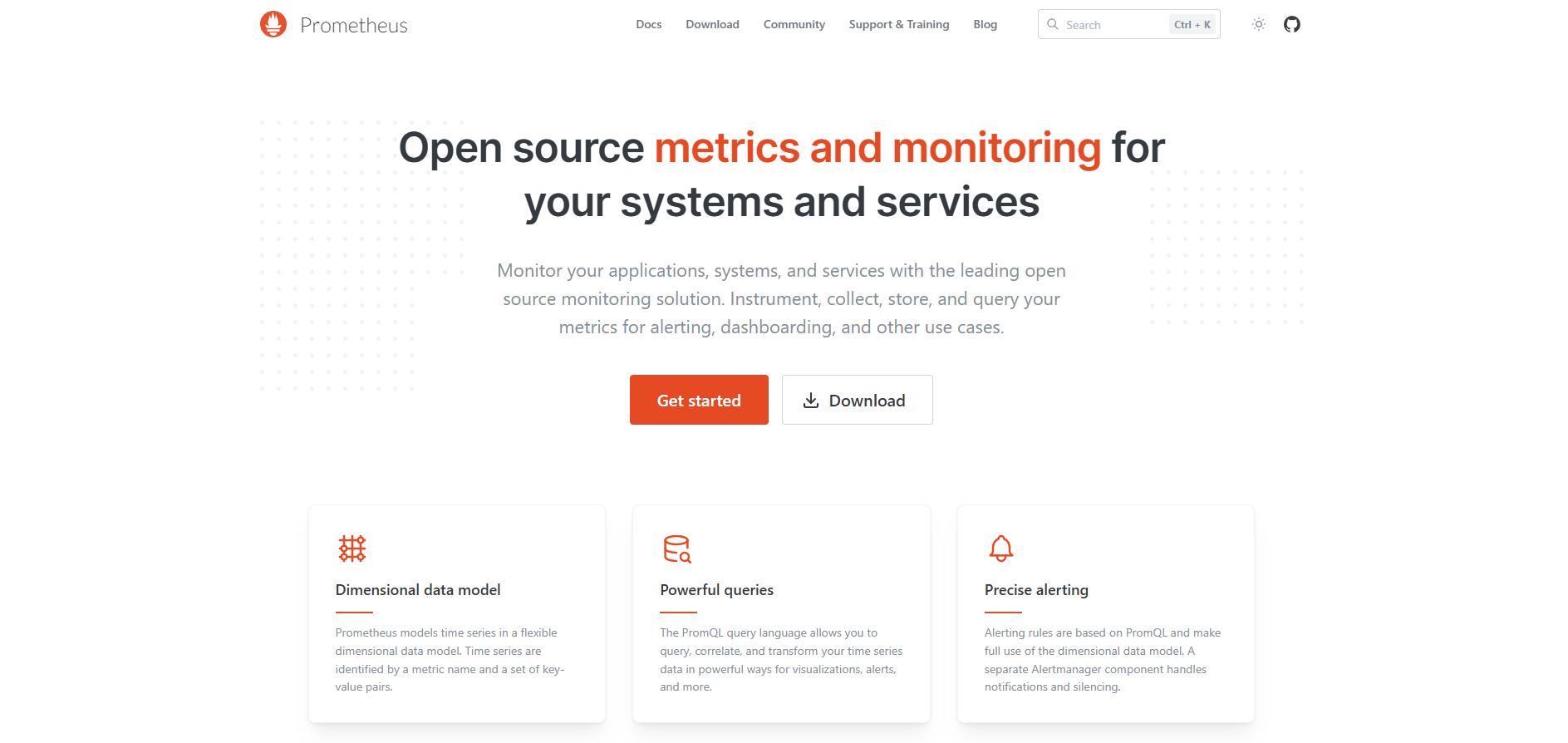

2. Prometheus

An open-source classic for large-scale systems. Prometheus excels at collecting massive amounts of data and works well with distributed LLM deployments.

Best for: Tech-heavy teams with scaling needs

Watch out for: Requires manual setup for LLM-specific monitoring

3. Grafana

Pairs beautifully with Prometheus and turns raw data into visual dashboards your team will actually want to look at.

Best for: Teams that value visualization and collaboration

Watch out for: Needs additional LLM logic to be fully useful

4. Datadog

A managed, all-in-one monitoring platform that integrates smoothly with LLMOps workflows. It handles logging, tracing, alerting, and even prompt testing.

Best for: Companies wanting a plug-and-play option

Watch out for: Costs rise fast as usage scales

5. Arize AI

Purpose-built for ML and LLM monitoring, Arize AI focuses on observability, fairness, and bias detection. It helps you track embeddings, catch drift, and run deep troubleshooting.

Best for: Teams prioritizing fairness, bias checks, and root cause analysis

Watch out for: More advanced setup, may be overkill for smaller projects

6. Langfuse

An open-source monitoring tool built specifically for LLM applications. Langfuse offers prompt tracing, evaluation, and real-time insights designed with developers in mind.

Best for: Developers building and testing LLM apps

Watch out for: Still evolving—some enterprise-level features are limited

7. Helicone

A developer-friendly tool that sits between your app and OpenAI (or other LLM APIs) to log and monitor requests. Offers analytics, cost tracking, and insights into usage patterns.

Best for: Startups and dev teams monitoring API-based LLM usage

Watch out for: Works best if your stack relies on API calls, less suited for custom LLM deployments

Core Monitoring Capabilities

Robust LLM monitoring is all about monitoring the right things at the right time. The entire idea is to understand how these models operate, detect problems, and correct them quickly. Core features provide more than just data logging—they provide visibility into what’s going on under the hood, from model performance to cost and user experience.

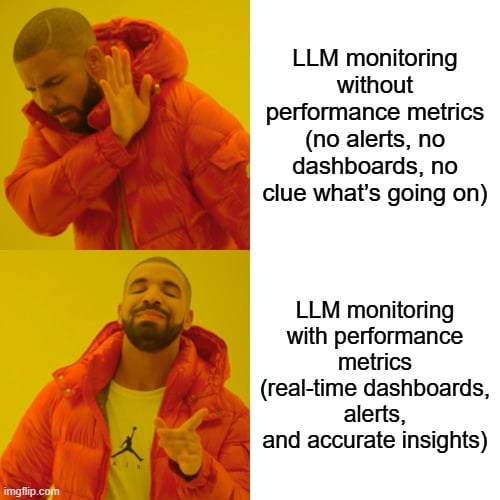

1. Performance Metrics

Performance metrics are the lifeblood of LLM monitoring. Teams examine how quickly a model answers, how accurate it is, and how frequently it scores for context relevance. Dashboards provide an immediate method to observe fluctuations and identify patterns, such as a sudden drop in answer speed or a surge in incorrect answers.

Automated checks — running test prompts, measuring response times — keep tabs on how the model deals with real-world tasks. An effective monitoring system should allow you to configure alerts. For instance, if the share of voice drops by 20% or there’s a new surge of negative feedback, teams are alerted.

Granular metrics, such as spans for each model task, help you break down each step to determine where things go awry, making problems easier to fix quickly.

2. Cost Tracking

Cost tracking is tracking every cent spent on model runs, tokens, or infrastructure. They demonstrate where the majority of spending is occurring and help identify patterns such as specific prompts that consume more resources than others. Along with granular cost views, agencies can establish benchmarks and monitor ROI.

This matters if you run many LLMs at scale or operate on lean client budgets. Sensing cost spikes early means you can adjust configurations or prompts to economize. Other times, simply toggling to less expensive model variants or altering token limits saves big.

Good tools allow you to experiment with these tweaks and observe the effect live.

3. Quality & Safety

LLM outputs should be transparent, reliable, and valuable. Monitoring is largely about looking for bias, harmful content, or irrelevant responses. Performing audits on a regular basis catches issues prior to reaching end users. Safety checks are important, particularly for outward-facing chatbots or tools in highly regulated areas.

Others employ frameworks that score responses for moral and safety. Others vet LLM answers by subjecting them to additional filters. Either way, maintaining a high bar for output quality safeguards both brand and users.

4. User Feedback

User feedback is a goldmine for fixing LLM flaws. Open feedback channels provide actual users with a voice. Teams leverage this feedback to identify what’s working and what requires attention. Worming into feedback reveals irritants, such as mystifying solutions or tardy responses.

Fast surveys or in-app ratings can point the path. Quick, candid user stories help craft improved prompts and increase credibility.

5. Data Privacy

LLM observability must respect privacy standards. Don’t ever forget robust data protection to protect user information. A look back at data storage and usage is a must. Teach teams and clients why privacy checks matter.

Key Features to Look For

When choosing an LLM monitoring tool, keep an eye out for:

- Cosine similarity & perplexity tracking → detect model drift

- Sentiment & bias detection → ensure fairness in responses

- Tracing & versioning → test prompts and measure consistency

- Dashboards & alerts → spot issues in real time

Scalability

Growth introduces fresh challenges. Observability tooling needs to scale as your LLM footprint does. Here’s a comparison:

|

Tool |

Scaling Options |

Best For |

|---|---|---|

|

Quick setup, customizable dashboards |

Agencies & consultants who want simplicity |

|

|

Prometheus |

Horizontal scaling, multi-node |

Large, technical teams needing open-source flexibility |

|

Grafana |

Integrates with Prometheus & others |

Teams focused on visualization & reporting |

|

Datadog |

Cloud-native scaling, all-in-one |

Enterprises wanting plug-and-play monitoring |

|

Arize AI |

Enterprise-grade scalability |

Teams prioritizing fairness, bias detection & drift tracking |

|

Langfuse |

Cloud auto-scale, open-source flexibility |

Developers building & testing LLM apps |

|

Helicone |

API-focused scaling, lightweight |

Startups & dev teams tracking LLM API usage |

Think in advance. Pound test tools with real loads to see how they sustain. Flexible pricing, such as free or minimal paid tiers, allows you to test drive before you buy.

The Human-in-the-Loop Imperative

LLMs are smart, but they miss things—subtle mistakes, cultural bias, or context only humans can catch. That’s where human-in-the-loop (HITL) comes in.

Why it matters:

-

In healthcare, law, or finance, even a tiny error can be costly.

-

Humans add what AI can’t: context, intuition, and lived experience.

-

Example: an AI might miss culturally biased phrasing, but a human would flag it immediately.

How it helps:

-

Reviewers catch errors before they cause damage.

-

Feedback loops make models smarter—e.g., customer service agents flagging bad chatbot answers so the bot improves over time.

-

Teams can quickly troubleshoot issues in real-world use.

What’s needed:

-

Trained reviewers who know what to look for

-

Clear tasks and systems for giving feedback

-

Processes to feed that feedback back into the model

The trade-off: Yes, HITL takes more time and people. But the result—trustworthy, higher-quality AI—is worth it.

Future of LLM Observability

As LLMs power more real-world apps, observability has become a must-have. It’s not just about outputs—it’s about knowing why models succeed or fail.

Where it’s headed:

- AI-powered monitoring → detects slowdowns, cost spikes, or risky outputs before users notice

- End-to-end tracing → visibility from prompt → response → real-world use

- Key metrics → latency, token spend, accuracy, and relevance tied to business goals

- Open standards → tools like OpenTelemetry make it easier to connect data across systems

Platforms like KPI.me already provide real-time dashboards and alerts, showing where the future is headed.

Bottom line: Teams that adopt smarter, real-time observability will keep their models accurate, cost-efficient, and trustworthy.

Conclusion

LLM monitoring isn’t just a safeguard—it’s the key to making AI reliable, fair, and future-ready. The best tools don’t just spot bugs; they reveal insights, reduce bias, and keep conversations flowing smoothly. Platforms like Prometheus, Grafana, and KPI.me each bring unique strengths, giving teams options that fit their needs and expertise.

With the right system in place, you’ll catch issues before users ever notice, build trust with transparent checks, and stay ahead as new features and fixes roll out.

At the end of the day, it’s about choosing a tool that fits your team’s rhythm—and using it to turn complexity into clarity.

Want expert guidance on making the most of LLMs? The team at SirLinksalot is here to help you cut through the noise and focus on what matters.

Frequently Asked Questions

What is LLM monitoring?

LLM monitoring monitors the performance, safety, and behavior of large language models. It allows you to troubleshoot, maintain compliance, and optimize user experience by monitoring model outputs.

Why do organizations need LLM monitoring tools?

LLM monitoring tools assist in detecting errors, biases, or security threats in language model responses. They guarantee models behave as expected and shield organizations from unforeseen repercussions.

What core features should LLM monitoring tools have?

Must-haves: real-time tracking & alerting, data logging, bias detection, and user feedback. These assist squads stay in charge and boost model dependability.

Are LLM monitoring tools necessary for all industries?

Any industry deploying language models can make use of monitoring tools. They help ensure accuracy, compliance, and user safety across industries such as healthcare, finance, and education.

Can LLM monitoring tools detect harmful content?

Some LLM oversight instruments are capable of alerting to or preventing harmful, prejudiced, or unsuitable results. This shields users and complies with international safety regulations.

How do I choose the best LLM monitoring tool?

Think about your particular requirements – like integration, scalability, data privacy, and human review support. Check out the best LLM monitoring tools.

What is the role of human-in-the-loop in LLM monitoring?

Human-in-the-loop refers to actual humans inspecting and enhancing model outputs. This guarantees more precise, ethical, and nuanced case management.